How does Batch Normalization Help Optimization?

Batch normalization (BatchNorm) is a widely adopted technique that enables faster and more stable training of deep neural networks. However, despite its pervasiveness, the exact reasons for BatchNorm’s effectiveness are still poorly understood.

In this talk, we take a closer look at the underpinnings of BatchNorm’s success. In particular, we examine the popular belief that BatchNorm’s effectiveness stems from reducing an effect called internal covariate shift (ICS). We then explore the connection between BatchNorm, ICS, and the optimization landscape of deep neural networks.

- Series: Microsoft Research Talks

- Date:

- Speakers: Andrew Ilyas

- Affiliation: MIT

Series: Microsoft Research Talks

Galea: The Bridge Between Mixed Reality and Neurotechnology

Speakers: Eva Esteban, Conor Russomanno

Current and Future Application of BCIs

Speakers: Christoph Guger

Challenges in Evolving a Successful Database Product (SQL Server) to a Cloud Service (SQL Azure)

Speakers: Hanuma Kodavalla, Phil Bernstein

Improving text prediction accuracy using neurophysiology

Speakers: Sophia Mehdizadeh

DIABLo: a Deep Individual-Agnostic Binaural Localizer

Speakers: Shoken Kaneko

Recent Efforts Towards Efficient And Scalable Neural Waveform Coding

Speakers: Kai Zhen

Audio-based Toxic Language Detection

Speakers: Midia Yousefi

From SqueezeNet to SqueezeBERT: Developing Efficient Deep Neural Networks

Speakers: Sujeeth Bharadwaj

Hope Speech and Help Speech: Surfacing Positivity Amidst Hate

Speakers: Monojit Choudhury

'F' to 'A' on the N.Y. Regents Science Exams: An Overview of the Aristo Project

Speakers: Peter Clark

-

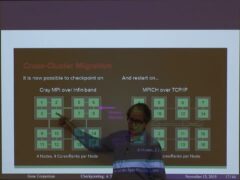

Checkpointing the Un-checkpointable: the Split-Process Approach for MPI and Formal Verification

Speakers: Gene Cooperman

Learning Structured Models for Safe Robot Control

Speakers: Ashish Kapoor
