They make restaurant recommendations, help us pay bills, and remind us of appointments. Many people have come to rely on virtual assistants and chatbots to perform a wide range of routine tasks. But what if a single dialog agent, the technology behind these language-based apps, could perform all these tasks and then take the conversation further? In addition to providing on-topic expertise, such as recommending a restaurant, it could engage in a conversation about the history of the neighborhood or a recent sports game, and then bring the conversation back on track. What if the agent’s responses continually reflected the latest world events? And what if it could do all of this without the need for any additional work by the designer?

With GODEL, this may not be far off. GODEL stands for Grounded Open Dialogue Language Model, and it ushers in a new class of pretrained language models that enable both task-oriented and social conversation and are evaluated by the usefulness of their responses.

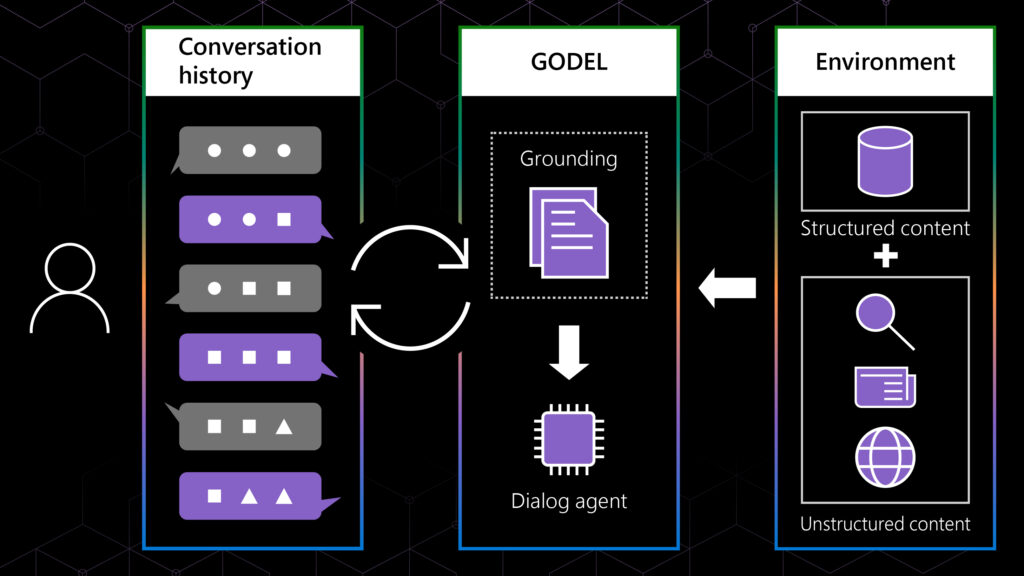

Pretrained language models are among the engines that power conversational AI, the technology that underlies these dialog agents. They can either be task-oriented (“give me a job, and I’ll do it”) or engage in a conversation without a specified outcome, known as open-domain dialog or chit-chat. GODEL combines both these capabilities, giving dialog agents the ability to generate responses based not just on the context of the conversation, but also on external information, that is, content that was not part of the dataset when the model was trained. This includes both structured content, such as information stored in databases, and unstructured content, such as restaurant reviews, Wikipedia articles, and other publicly available material found on the web. This explains how a simple task-based query about restaurant recommendations can evolve into a dialog about ingredients, food, and even cooking techniques: the kind of winding path that real-world conversations take.

In 2019, the Deep Learning and Natural Language Processing groups at Microsoft Research released DialoGPT, the first large-scale pretrained language model designed specifically for dialog. This helped make conversational AI more accessible and easier to work with, and it enabled the research community to make considerable progress in this area. With GODEL, our goal is to help further this progress by empowering researchers and developers to create dialog agents that are unrestricted in the types of queries they can respond to and the sources of information they can draw from. We also worked to ensure those responses are useful to the person making the query.

In our paper, “GODEL: Large-Scale Pre-training for Goal-Directed Dialog,” we describe the technical details underlying GODEL, and we have made the code available on GitHub.

A grounded model

One of GODEL’s key features is the flexibility it provides users in defining their model’s grounding, that is, the sources from which their dialog agents retrieve information. This flexibility underpins GODEL’s versatility in diverse conversational settings. If someone were to inquire about a local restaurant, for example, GODEL would be able to provide specific and accurate responses even though that venue may not have been included in the data used to train it. Responses would vary depending on whether the grounding information is empty, a snippet of a document, a search result (unstructured text), or information drawn from a database about the restaurant (structured text). However, each response would be appropriate and useful.
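As a rough illustration of the grounding options described above, the sketch below shows one way that empty, unstructured, and structured grounding could each be serialized into a single text input for a sequence-to-sequence dialog model. The marker strings, the helper names `flatten_records` and `build_query`, the instruction text, and the restaurant data are all assumptions made for illustration. This post does not specify GODEL's actual input format, so the released code remains the reference for that.

```python
from typing import Dict, List, Sequence

# Hypothetical marker strings (assumptions for illustration only);
# this post does not state the input format GODEL actually expects.
CONTEXT_MARKER = "[CONTEXT]"
KNOWLEDGE_MARKER = "[KNOWLEDGE]"
TURN_SEPARATOR = " EOS "


def flatten_records(records: Sequence[Dict[str, str]]) -> str:
    """Serialize structured rows (for example, database results) into plain text."""
    rows = []
    for record in records:
        fields = ", ".join(f"{key}: {value}" for key, value in record.items())
        rows.append(fields)
    return " | ".join(rows)


def build_query(instruction: str, dialog: List[str], grounding: str = "") -> str:
    """Join the instruction, dialog history, and optional grounding text."""
    history = TURN_SEPARATOR.join(dialog)
    query = f"{instruction} {CONTEXT_MARKER} {history}"
    if grounding:
        # Grounding is appended only when the caller supplies some.
        query += f" {KNOWLEDGE_MARKER} {grounding}"
    return query


# Invented sample data, used only to exercise the helpers.
instruction = "Instruction: given a dialog context and related knowledge, respond helpfully."
dialog = ["Can you recommend a place for dinner nearby?"]

# 1) Empty grounding: the model would see only the conversation.
print(build_query(instruction, dialog))

# 2) Unstructured grounding: a snippet of retrieved text.
review = "Reviewers praise the handmade pasta at Example Trattoria."
print(build_query(instruction, dialog, review))

# 3) Structured grounding: database rows flattened into text.
rows = [
    {"name": "Example Trattoria", "food": "Italian", "area": "centre"},
    {"name": "Sample Curry House", "food": "Indian", "area": "north"},
]
print(build_query(instruction, dialog, flatten_records(rows)))
```

Keeping the grounding as a plain string means the same model input path can serve all three cases, and only the step that produces the grounding text changes between them.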

In addition to specificity, grounded generation helps keep models up to date, as the grounded text can incorporate information that may not have been available at the time the model was trained. For example, if a model were developed before the 2022 Winter Olympics, GODEL would be able to provide details on those games and a list of winners even though all the data available to train it predates that event.

Broad application of GODEL

Another main feature of GODEL is its wide range of dialog applications. While its predecessor, DialoGPT, and other prior pretrained models for dialog have mostly focused on social bots, GODEL can be applied to a variety of dialog types, including task-oriented dialog, question answering, and grounded chit-chat. In the same conversation, GODEL can produce reasonable responses for a variety of query types, including general questions or requests for specific actions.

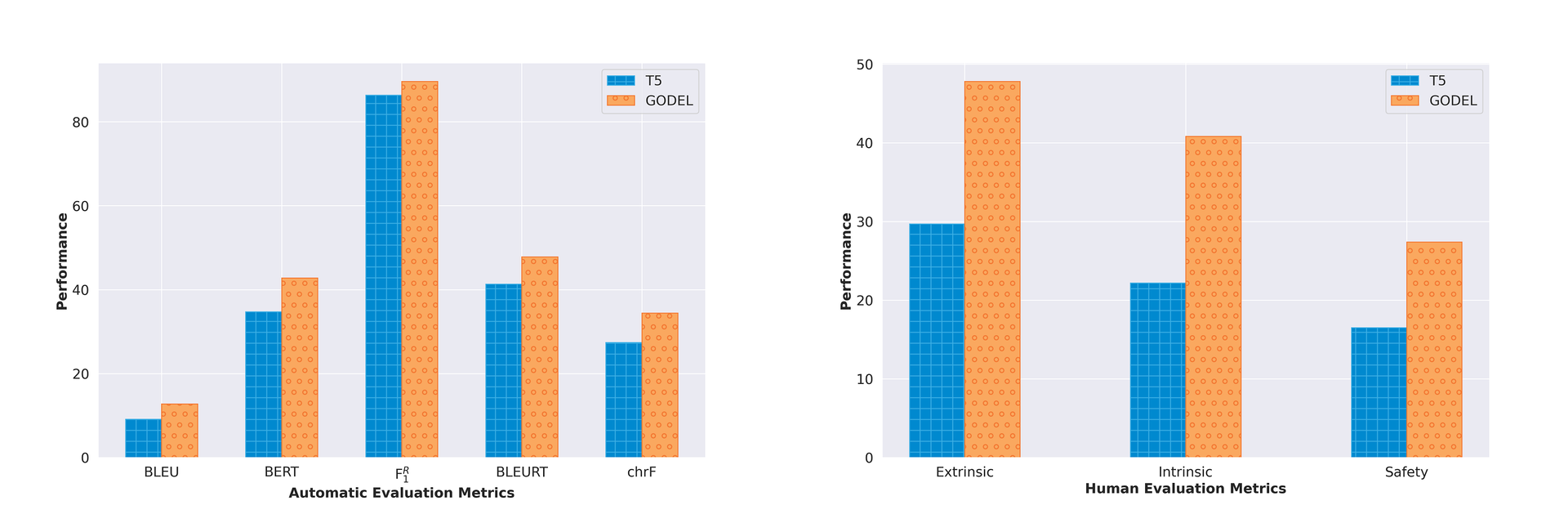

In addition, GODEL’s responses have been evaluated for their helpfulness. In our paper, we show that evaluation is done more reliably on datasets that are goal-directed, and that people generally agree on which responses are better when asked to judge their utility towards achieving certain goals. Equipped with this robust evaluation setup, we compared our model against several strong baselines and state-of-the-art approaches and showed that GODEL is superior in terms of both human and automatic evaluation, as indicated in Figure 1. The paper describes extensive experiments against other state-of-the-art pretrained language models and demonstrates that performance gains are even larger in these cases.

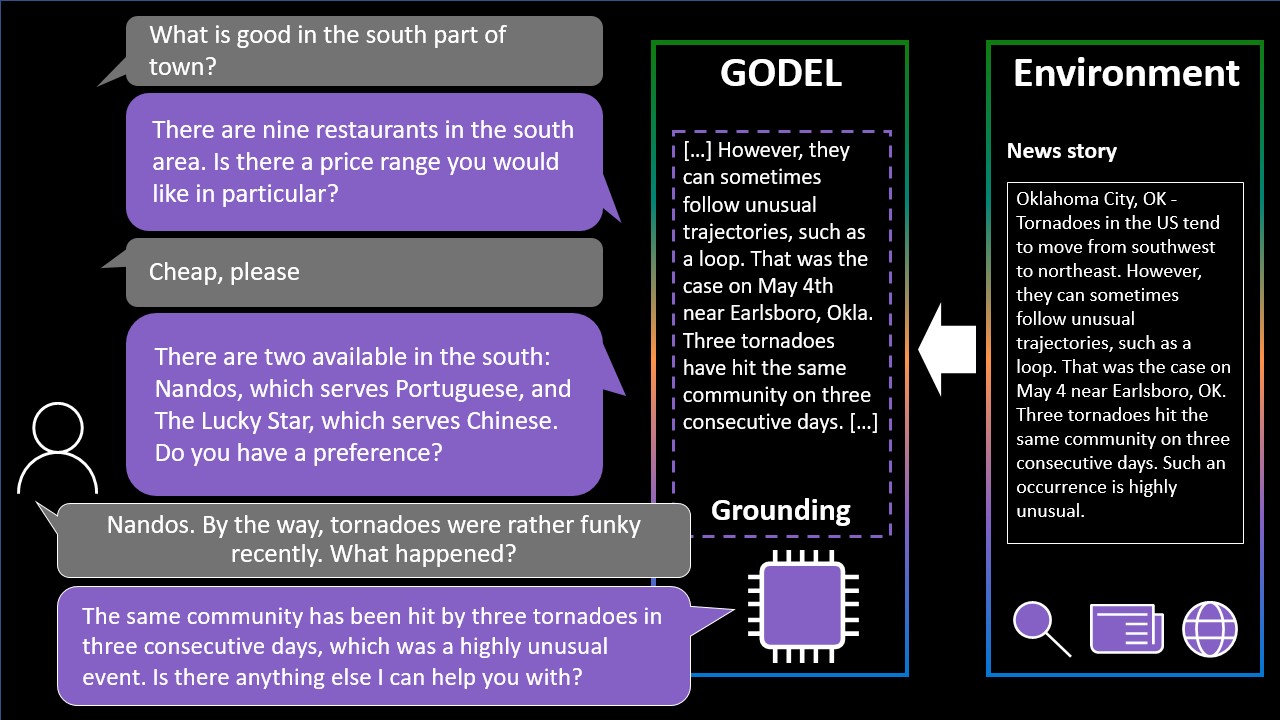

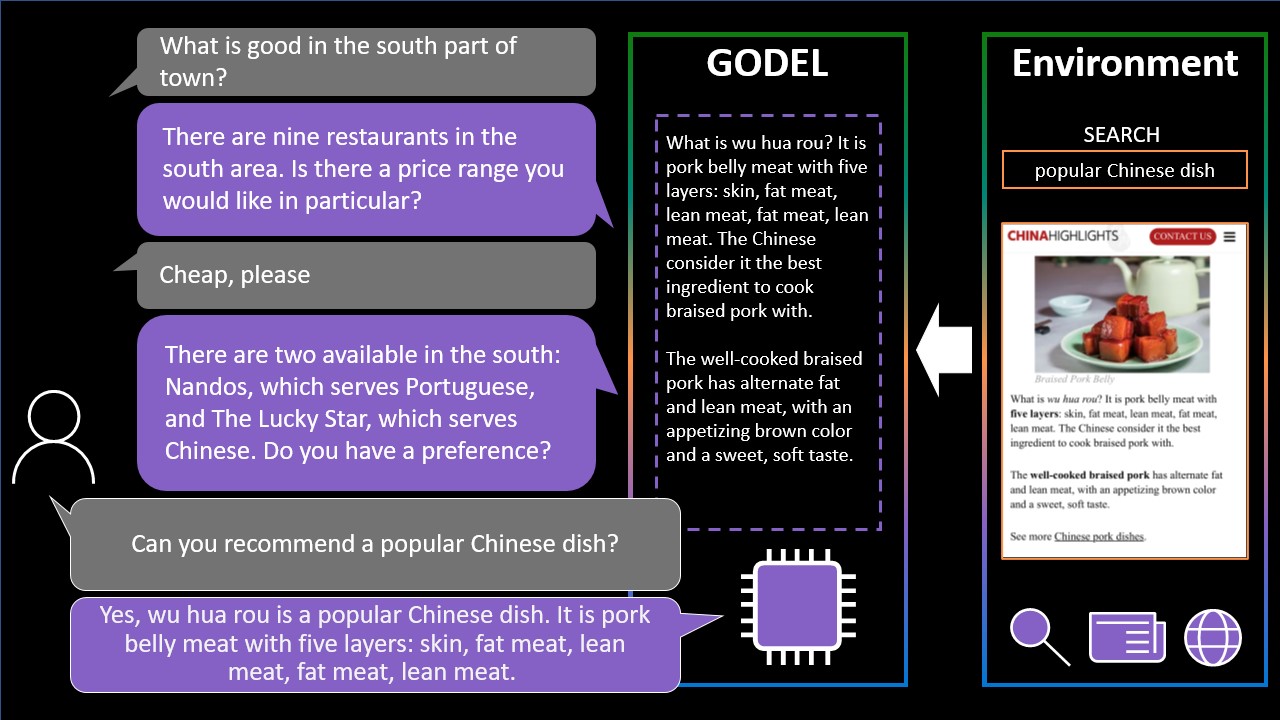

The following examples illustrate different dialog scenarios where GODEL uses a variety of sources to respond to identical user queries.

This example illustrates how GODEL responds in a task-oriented setting in which the model is connected to the components of a traditional goal-oriented dialog system, such as a database. In this case, the relevant environment contains structured information: a database returning two restaurants relevant to the current conversation.

This example illustrates how GODEL responds in a task-oriented setting in which traditional components of task-oriented dialog systems are not available. In this case, GODEL retrieves a restaurant review via a search engine. The response reflects both the context of the conversation and a snippet of the retrieved text, a restaurant review.

GODEL available as open source

To advance research, we believe it is crucial to make code and models publicly available, and we have released GODEL as fully open source. We have made three versions of GODEL available: base, large, and extra-large. We are also including the code needed to retrain all pretrained models and to fine-tune models for specific tasks: the CoQA dataset, intended for conversational question-answering; the Wizard of Wikipedia and Wizard of the Internet datasets, aimed at information-seeking chats; and MultiWOZ, for task-completion dialogs.

We hope GODEL helps numerous academic research teams advance the field of conversational AI with innovative dialog models while eliminating the need for significant GPU resources. We plan to continuously improve GODEL and make more models available to the research community. Please visit our project page to learn more about the GODEL project and new releases.

Acknowledgements

We would like to thank our colleagues at Microsoft Research who contributed to this work and blog post: Bill Dolan, Pengcheng He, Elnaz Nouri, and Clarisse Simoes Ribeiro.